AI Plan Review Software Buyer's Guide for Permitting Departments

By Jen Nieto, Government Technology Writer · Updated May 2026

Key takeaways

- "AI plan review" gets applied to very different tools. Test-fit solutions, permit guides, and code compliance checkers all get marketed under the same label, but only AI plan review software directly reduces review time and correction cycles.

- Not every jurisdiction needs AI plan review software. The strongest ROI comes when permit volume, staffing constraints, and submission quality issues converge.

- Most departments start with intake. Improving submission quality before applications enter formal review is typically the lowest-risk, fastest path to value.

- Platforms should keep reviewers in control. AI should reduce the work around decisions, not make the decisions themselves.

- Implementation doesn't require replacing existing systems. Most departments start with a standalone deployment and integrate over time.

- Early results can come quickly. Intake-focused deployments show measurable improvements within weeks. The City and County of Honolulu saw 80% faster permit approvals and a 70% reduction in review cycles within months of going live with CivCheck.

What is AI plan review software?

AI plan review software is a purpose-built tool that helps permitting departments analyze submitted plans, flag missing information, and give reviewers what they need to make faster, more consistent decisions — without replacing their judgment.

It speeds up the most time-consuming parts of plan review by taking on the work that slows reviewers down:

- Analyzing plan sets and application materials

- Flagging missing documents and information

- Mapping requirements based on project type and context

- Pulling together the specific details a reviewer needs to make an informed decision

Think of it as a co-pilot. It compiles documents, runs calculations, pulls relevant code sections, and points reviewers to the right places in the plan set. From there, the work shifts back to the reviewer. They stay accountable for making the final call.

A well-designed platform can drastically cut review time by reducing manual searches, repeated checks, and back-and-forth over missing information.

Reviewers still evaluate intent, handle edge cases, and make the final determination. But they're no longer forced to rely on memory, manual searches, or informal practices that vary from person to person.

Many platforms also extend this capability to applicants. The same underlying technology functions as guided permit preparation, helping applicants understand which documents and information are required and flagging issues before they submit. While the system is the same, the experience for applicants and reviewers is intentionally different, because they're solving different problems.

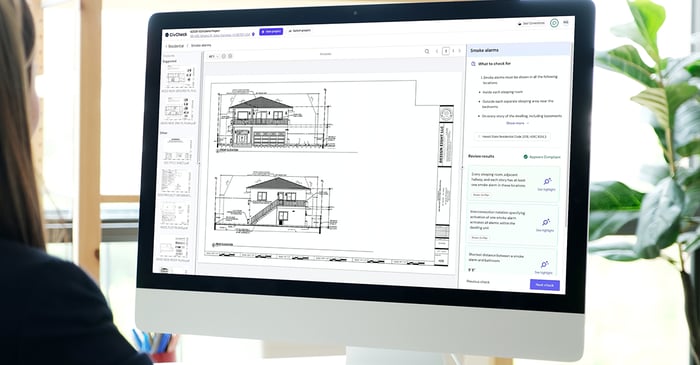

CivCheck, Clariti's AI Guided Plan Review Platform, was designed to provide two genuinely separate experiences—one for the applicant preparing a submission and one for the reviewer evaluating it—instead of giving both sides access to the same interface.

Why Permitting Departments Are Exploring AI

Permitting departments are turning to AI because rising application volumes, staff turnover, and inconsistent review practices are creating backlogs that traditional approaches can't solve.

Permit backlogs aren't a new problem, but that combination is pushing more departments to look for a better way to manage the workload.

The challenge isn't only about reducing time-to-issuance; it's about understanding how to address incomplete submissions that are eating up staff time before a plan reviewer ever evaluates code compliance. Review practices vary from person to person, and standardization efforts aren't always applied consistently. When experienced reviewers move on to new roles or retire, the institutional knowledge they carried often goes with them.

AI plan review is gaining traction because it addresses these problems directly. This type of software improves submission quality before applications enter formal review, gives reviewers the information they need to make faster decisions, and helps new staff get up to speed more quickly.

To see what makes AI plan review software different, it helps to compare it to other tools in the market.

The Different Types of AI in Permitting

Not all AI tools in permitting solve the same problem, and using the wrong one won't reduce your backlog. Test-fit tools, permit guides, code compliance checkers, and true AI plan review software all get marketed under the same label, but they serve fundamentally different purposes.

A tool designed to answer "What can be built on this parcel?" solves a different problem than one designed to answer "Is this project ready to be permitted?" Understanding those distinctions makes it easier to avoid investing in something that looks great in a demo but doesn't address the actual cause of your permitting delays.

Test-fit and massing tools

What they do: Help developers and designers answer early-stage questions like: What can be built on this parcel? These tools often take BIM/IFC files and use GIS + parcel data to model zoning envelopes, setbacks, height limits, and other massing constraints.

Good for: Municipalities with significant new development that want to give developers clearer answers about what's buildable and reduce zoning-envelope questions.

Limitations:

- Require clean GIS data and structured building files (BIM/IFC)

- Typically struggle with PDFs

- Minimal to no impact on permit review timelines

- Don't assess permit readiness or validate documentation

The bottom line: These are planning tools, not permitting tools. If permit review timelines or application quality are the problem, a test-fit solution won't move the needle.

Examples: Archistar, UrbanForm

Permit guides and intake tools

What they do: Help applicants understand which permits they need and what's required to apply. They typically ask a series of questions and generate instructions, checklists, and sometimes fee estimates based on the applicant's responses.

Good for: Jurisdictions where applicants pick the wrong permit type, staff are fielding many basic "where do I start?" questions, or intake teams spend time correcting preventable submission errors. Can reduce counter visits, calls, and emails.

Limitations:

- Stop at guidance (they don't analyze plans)

- Can't verify whether the required information appears in submitted documents

- Don't support the detailed plan review checks

The bottom line: Permit guides can improve applicant understanding, but they're not a substitute for plan review support. If low-quality submissions and long review cycles drive delays, a guide alone won't solve the problem.

Examples: Clariti Guide, OpenCounter

Automated code compliance tools

What they do: Built for architects and engineers during the design phase. Many code compliance tools integrate into design software and help check specific parts of a design against select code requirements.

Good for: Helping designers catch errors before submission.

Limitations:

- Usually limited to a subset of codes

- Local amendments usually require customization (often at an added cost)

- Designed around design workflows, not permitting workflows

- Struggle with PDF plan sets

The bottom line: Automated code compliance tools answer "Is this design compliant?" — which is not the question plan reviewers need to answer. Permit documents serve as the legal record, and plan reviewers need to verify that compliance is shown in those documents.

Examples: CodeComply

AI plan review software

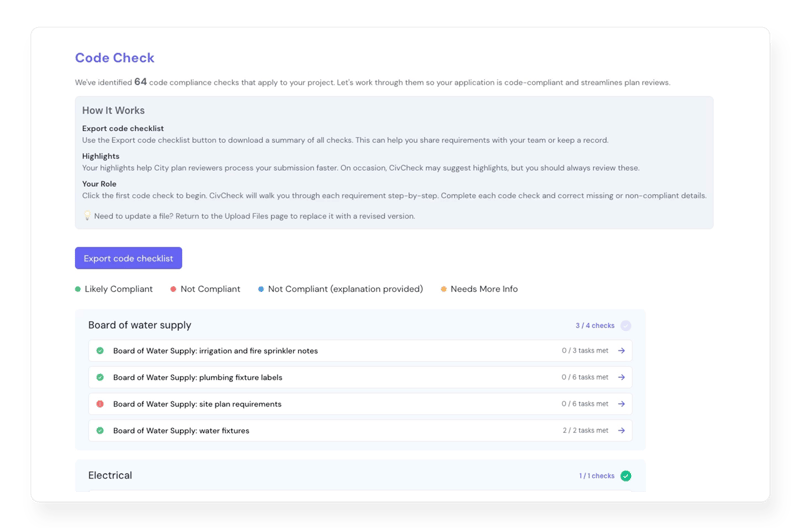

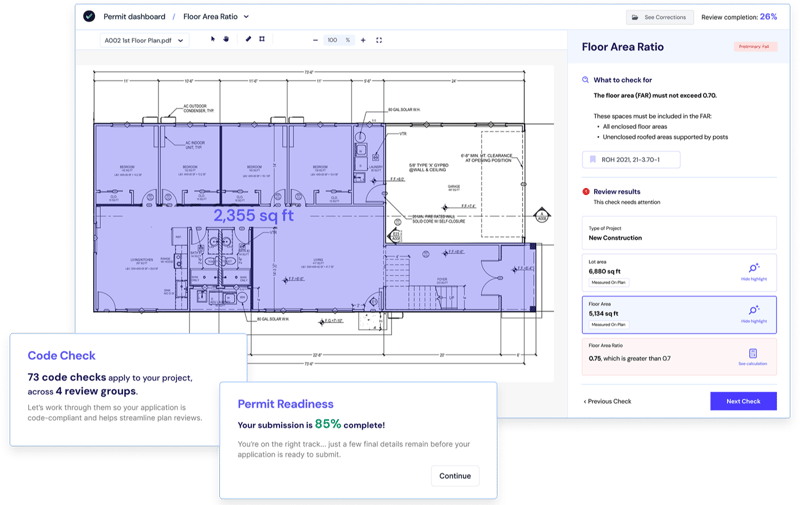

What it does: Designed specifically to support permitting workflows. It analyzes submitted plans and application materials to confirm required documents are present, verify that key information appears in the plan set, and show where compliance is demonstrated (or where gaps exist). It pulls relevant code sections, performs calculations, and points reviewers to the exact locations in the plans where applicants addressed those requirements.

Good for: Jurisdictions where review capacity can't keep up with application volume, where submission quality is driving correction cycles, or where staff turnover makes it hard to maintain consistency.

Limitations:

- Requires digital submissions (PDF at minimum)

- Value depends on how well the platform reflects a department's review practices, not generic code

The bottom line: This is the category that can directly reduce review time and correction cycles. The most effective platforms act as a co-pilot, handling information gathering so reviewers can focus on decision-making.

Examples: CivCheck

CivCheck supports both intake and detailed plan review within a single platform, so departments can address submission quality and review efficiency together. It's built to work with PDF plan sets so that applicants can upload standard permit documents without reformatting or manual tagging. With 97% code interpretation accuracy and 99% code book coverage, the platform supports building and planning checks and is configured to reflect local amendments and jurisdiction-specific practices.

At a glance: Comparing AI tools in permitting

| Tool type | Primary purpose | Who it's for | What it helps with | What it doesn't do |
|---|---|---|---|---|
| Test-fit / massing tools | Explore what can be built on a parcel | Developers, planners | Early-stage feasibility, zoning envelopes | Reduce permit review time or validate permit readiness |
| Permit guides | Help applicants understand requirements | Applicants, permit counters | Identifying required permits, documents, and fees | Analyze plans or support detailed plan review |
| Automated code compliance tools | Validate designs during drafting | Architects, engineers | Catching select design issues early | Reflect local review practices or handle PDF-based plan sets well |
| AI permit intake tools | Improve submission quality at intake | Applicants, intake staff | Checking document completeness and basic information | Perform full plan reviews or detailed code analysis |
| AI plan review software | Support permit decision-making | Plan reviewers, departments | Finding required info, verifying compliance, reducing review cycles | Replace professional judgment or automate permit approvals |

What AI Plan Review Software Doesn't Do

AI plan review software reduces review time and improves submission quality, but it has real limits that are worth understanding before you buy.

Being clear about those limits upfront helps set realistic expectations and avoids investing in a solution that isn't matched to your organization's problem.

- It won't fix broken processes. If handoffs between departments are unclear or policies are inconsistently applied, AI plan review software won't resolve that on its own.

- It doesn't work with paper-based submissions. Digital submissions (PDF at minimum) are required.

- It doesn't replace reviewer judgment. Final determinations stay with qualified staff.

- It won't deliver results if adoption is low. Like any tool, value depends on how consistently it's used.

Do You Actually Need AI Plan Review Software?

Not every jurisdiction needs AI plan review software. The strongest ROI comes when permit volume, staffing constraints, and submission quality issues converge — and the honest first step is to assess your readiness.

AI plan review is a powerful tool, but its ROI depends heavily on overall readiness, including where your permitting process breaks down and whether the scale of the problem justifies the investment.

| Signs your department may be ready | When it makes sense to wait |
|---|---|

Common Use Cases (and Where Most Jurisdictions Start)

Most jurisdictions start with permit intake and expand from there. AI plan review use cases follow a predictable progression based on volume, risk, and readiness, and the entry point is almost always the part of the process where incomplete submissions are causing the most delays.

Adoption rarely happens all at once. Use cases tend to expand gradually as teams get comfortable with the technology and start to see where it fits into their existing processes.

Use case 1: Improving permit intake and submission quality

For many jurisdictions, the highest-impact starting point is permit intake. This phase is also where CivCheck customers usually start.

Incomplete or low-quality submissions create work long before a plan reviewer ever evaluates code compliance, and they're often the root cause of permitting delays that don't get addressed. Staff time gets spent identifying missing documents, requesting basic corrections, and cycling applications back to applicants.

AI-supported intake addresses this by:

- Checking whether applicants included the required documents

- Confirming that key information appears in submitted plans

- Flagging common issues before an application enters formal review
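The first of these checks, confirming that required documents are present, can be illustrated with a minimal sketch. This is not how any particular platform works: the project types, document names, and the `find_missing_documents` helper are all hypothetical, and a real system would also need to read the plan contents rather than compare file labels.

```python
# Hypothetical required-document lists per project type. A real department
# would configure these from its own submittal checklists.
REQUIRED_DOCUMENTS = {
    "adu": {"site plan", "floor plan", "elevations", "energy compliance form"},
    "single_family": {"site plan", "floor plan", "elevations", "structural calculations"},
}


def find_missing_documents(project_type: str, submitted: set[str]) -> list[str]:
    """Return the required documents absent from a submission, sorted by name."""
    required = REQUIRED_DOCUMENTS[project_type]
    # Normalize case and whitespace so "Site Plan" matches "site plan".
    normalized = {name.strip().lower() for name in submitted}
    return sorted(required - normalized)


missing = find_missing_documents("adu", {"Site Plan", "Floor Plan"})
print(missing)  # ['elevations', 'energy compliance form']
```

The point of the sketch is the timing: the applicant sees the list of gaps before the application enters the review queue, so staff never have to request those documents.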

This intake process works for all applicants, from homeowners and small contractors to architects and engineers. Improving submission quality at intake reduces avoidable correction cycles, shortens overall review timelines, and keeps staff focused on the work that requires their expertise.

Because intake focuses on completeness and clarity rather than final approval, it's often the lowest-risk and fastest path to value when introducing AI into the permitting process.

Use case 2: Supporting plan reviewers during detailed review

Once intake is running smoothly, many permitting departments expand into deeper plan review support.

A reviewer opening a residential addition or ADU project sees the relevant code sections, calculations, and plan locations compiled in one place. Instead of flipping through dozens of sheets and cross-referencing multiple code sections, the reviewer can move directly into verification to confirm what is shown, resolve outlier cases, and make the final determination.

This approach is valuable for high-volume permit types:

- Single-family homes

- Accessory dwelling units (ADUs)

- Duplexes and townhomes

- Small commercial projects

As more review groups adopt the platform, the consistency benefits grow. When building, fire, planning, and public works all apply requirements the same way, applicants need fewer corrections at each stage, and applications move through the full process with less rework.

Use case 3: Expanding to additional permit types

As departments gain confidence, adoption typically expands beyond initial residential or intake-focused processes. Common next steps include:

- Residential construction (single-family, ADUs, duplexes, townhomes), where volume is high and timelines are closely tied to housing delivery goals.

- Commercial interior buildouts (restaurants, tenant improvements, office suites), which are economically significant and often follow repeatable patterns.

- Other high-volume workflows, such as business licenses or public works permits, where backlogs persist and processes are well-defined.

The most successful implementations treat AI plan review as a platform that grows alongside expanding needs, rather than a solution built around a single permit type.

How AI Plan Review Works

AI plan review software supports both applicants before submission and reviewers during detailed plan review — working in four steps that together reduce correction cycles and speed up permit issuance.

Here's what that looks like in practice.

Step 1: Applicants prepare and pre-check submissions

Before submitting, applicants upload their plans and documents. The platform asks a few questions to determine the project type, then walks them through what's required. It flags missing documents, identifies information that needs to appear on the plans, and highlights where code requirements appear to be unmet. Applicants can address issues before they submit, which means fewer problems once an application enters formal review.

Step 2: Higher-quality applications enter the review queue

Applications that have been pre-checked arrive at intake with fewer missing documents and clearer information. For intake staff, this means spending less time on basic completeness triage. For plan reviewers, it means spending less time searching for information and more time evaluating compliance and intent.

Step 3: AI supports reviewers during detailed plan review

Reviewers see relevant code sections, calculations, and plan locations compiled in one place for each requirement. Instead of manually cross-referencing codes and flipping through plan sets, they can move directly into verification. Reviewers still make every determination. The software reduces the time spent getting to that point.

Step 4: Review cycles drop

When applicants enter review with clearer, more complete submissions and reviewers are working from the same structured set of requirements, the number of review cycles drops significantly. Permit issuance time decreases, and staff time is redirected to work that requires their expertise.

Implementation Approaches for AI Plan Review Software

AI plan review software can be deployed in two ways: as a standalone system or integrated into existing permitting systems. Most jurisdictions start with standalone because it requires no changes to existing processes.

One of the first concerns jurisdictions raise when evaluating AI plan review software is how it fits into their existing systems. Most departments already rely on multiple tools to manage applications, plans, and communications. AI plan review doesn't require replacing those systems to be useful.

| Standalone deployment | Integrated deployment |
|---|---|

Many jurisdictions start with standalone deployment, which typically has a shorter implementation timeline than a full integrated rollout. It positions AI plan review as part of applicant preparation, not as an added step inside the formal permitting process. The permitting workflow doesn't become longer. Instead, applicants are guided through the work they were expected to do before submitting, now with a clearer structure and instant feedback.

Common integration points

- Permitting systems (such as Clariti Enterprise and Launch): These integrations allow applicant and project information to flow between systems so data doesn't need to be re-entered manually. This can simplify intake and reduce administrative burden for staff.

- Electronic plan review tools (such as Bluebeam): Reviewers use these to annotate drawings and communicate corrections. CivCheck functions as a side-by-side research and verification tool, guiding reviewers to relevant plan sheets and requirements while corrections are finalized in existing review systems.

How to Evaluate AI Plan Review Vendors

Evaluating AI plan review vendors requires looking past marketing claims to assess accuracy, real-world permitting experience, and whether the platform keeps reviewers in control. As interest in AI grows, more vendors are marketing tools as "AI plan review" — but capabilities, maturity levels, and intended use cases vary widely.

Look past surface-level AI

A common misconception in this market is that a platform that looks "futuristic" or "sleek" must be effective at reducing permitting times. Some platforms lean heavily on conversational interfaces (think ChatGPT) or generalized AI models that look impressive in demos but struggle with accuracy, consistency, or explainability in real workflows.

Vendors should be able to explain clearly:

- What types of AI they use across the platform

- Where AI is applied and where it isn't

- How they measure accuracy for specific checks

Understand augmented intelligence vs. automated decision-making

The difference between augmented intelligence and automated decision-making matters more in permitting than most people realize. A platform built around human-in-the-loop decision-making keeps reviewers accountable and in control. One that automates the decisions and asks for confirmation makes accountability hard to track and defend.

| Augmented intelligence | Automated decision-making |
|---|---|

One way to test this in a demo is to look for what vendors call verification friction, or the degree to which the platform makes it hard to mindlessly accept AI results. A well-designed system should require reviewers to genuinely engage with each check, not just click through.

Look for platforms explicitly designed with a human-in-the-loop approach, where AI reduces the work around a decision, not the decision itself. These platforms have typically applied ethical AI design as a core requirement of their software, not an afterthought.

Dig into accuracy claims

Accuracy claims can be misleading without context. Two vendors might both claim "faster approvals." One may be referring to speed on a narrow set of automated checks. Another may be referring to overall reductions in permit cycle time. Both statements can be technically true, but they point to different outcomes for your department.

Questions to ask:

- Which checks are supported today, and what portion of your total review do they cover?

- How is accuracy measured for individual checks versus end-to-end outcomes?

- How does the system behave when confidence is low or information is missing?

- Can you share performance data from departments that are currently using the platform, not just pilots or projections?
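To make the distinction between per-check and overall accuracy concrete, here is a minimal sketch of one way a department could measure agreement between AI results and reviewer decisions during a pilot. The check names and sample records are made up for illustration, and agreement with reviewers is only one possible definition of accuracy; vendors may measure it differently.

```python
from collections import defaultdict

# Each record: (check type, AI result, reviewer's final decision).
# Made-up sample data for illustration only.
results = [
    ("setback", "pass", "pass"),
    ("setback", "fail", "fail"),
    ("setback", "pass", "fail"),
    ("document_completeness", "pass", "pass"),
    ("document_completeness", "fail", "fail"),
]


def agreement_by_check(records):
    """Return {check_type: share of AI results matching the reviewer's decision}."""
    totals = defaultdict(int)
    matches = defaultdict(int)
    for check_type, ai_result, reviewer_decision in records:
        totals[check_type] += 1
        if ai_result == reviewer_decision:
            matches[check_type] += 1
    return {check: matches[check] / totals[check] for check in totals}


per_check = agreement_by_check(results)
overall = sum(ai == human for _, ai, human in results) / len(results)
print(per_check)
print(f"overall agreement: {overall:.0%}")
```

In this sample the overall figure is 80%, but the setback check agrees with reviewers only two times out of three. A single headline number can hide a weak check, which is why it is worth asking for results broken out by check type.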

Account for real-world permitting nuance

Permitting rarely follows a clean, uniform pattern. Local amendments, phased code adoption, additions and alterations, and grandfathered projects all introduce variation in how permits are issued. During evaluation, ask how the platform handles:

- Local code amendments and jurisdiction-specific practices

- Grace periods when multiple code versions are accepted

- Additions, alterations, and existing building conditions

- Multiple ways to demonstrate compliance within plans

Ask vendors the right questions

These are the most important questions to ask plan review vendors before you buy:

- What is the turnaround time to process a PDF plan set?

- How quickly can regulatory updates be reflected in the system?

- Can the platform support grace periods during code transitions?

- Has the platform been used in production for additions, alterations, and grandfathered projects?

- How much configuration is required to reflect local amendments? Is there an additional cost for this?

- What level of staff involvement is required during implementation?

- Can you connect us with jurisdictions that have been live for at least six months?

What to look for in AI plan review software

- ✓ Fast implementation: weeks, not months

- ✓ PDF-native, with no reformatting or manual tagging required

- ✓ AI-powered completeness checks

- ✓ Guided code compliance for both applicants and staff

- ✓ Intelligent plan navigation

- ✓ Automatic code-based calculations

- ✓ Full digital code library

- ✓ Flexible integrations with existing permitting software or plan review tools

Evaluate the partnership, not just the product

AI plan review isn't a set-it-and-forget-it purchase. The strongest results come from vendors that help permitting departments think through process design, documentation, and change management.

Implementation experience in government permitting is just as important as technical capability. Ask about the backgrounds of the implementation team, how they handle onboarding, and what ongoing support looks like once you're live.

CivCheck's implementation team, for example, includes former plan reviewers, licensed ICC professionals, architects, and professionals with 15–20 years of government permitting experience.

Prioritize explainability, consistency, and maturity

Most demos will show that an AI system can do the job, but to validate whether or not it's suitable for government use, check that it meets the following three must-have criteria:

- Explainability: Reviewers can see exactly where an answer came from, including exact sheets and code sections.

- Consistency: The platform applies requirements the same way every time, regardless of who's logged in.

- Maturity: The vendor tested and proved the platform works in real permitting environments, not just in demos.

This is why it's important to ask vendors how long they've been in production, how many jurisdictions are actively using the platform, and how they handle exceptions.

Pricing, ROI, and Making the Business Case

AI plan review software is typically priced based on permit volume, project type, and scope of functionality, with modular models that let jurisdictions start small and expand over time.

How pricing is typically structured

Most AI plan review software is priced based on a combination of:

- Annual permit volume

- Project type or complexity (residential vs. commercial)

- Scope of functionality (intake checks only versus full plan review and code compliance)

Modular pricing models give jurisdictions more flexibility. Teams can start with a limited scope, such as intake or a single permit type, and expand over time without committing to a full rollout from day one.

CivCheck uses a modular pricing structure so departments can adopt intake and plan review capabilities separately and expand as needed. Pricing is typically tiered by permit volume range rather than exact counts, which helps with budget predictability during procurement.

How to think about ROI

The return on investment for AI plan review is usually tied to operational efficiency, economic impact, and workforce sustainability, not just direct cost reduction:

- Increased throughput. When staff can process more applications in the same amount of time, backlogs shrink, and projects move forward faster. Permit fees are collected sooner, development projects reach construction faster, and the taxable value of permitted properties hits the rolls earlier.

- Reduced staff time per application. Less time spent searching for information, verifying completeness, and issuing repeat corrections means staff time is redirected to substantive review.

- Fewer correction cycles. Standardized application of requirements reduces rework for both staff and applicants.

- Workforce sustainability. For jurisdictions facing retirements and hiring challenges, AI plan review helps maintain capacity without scaling headcount, and gives new staff a path to building expertise.

Calculate the estimated staff hours and labor costs AI plan review software could save you annually using this ROI calculator.
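For a rough first pass before using a vendor's calculator, the staff-time portion of ROI can be sketched as simple arithmetic. The function below is an illustrative assumption, not the formula behind any vendor's calculator, and every input value shown is a placeholder to be replaced with your department's own baseline.

```python
def estimate_annual_savings(
    permits_per_year: int,
    review_hours_per_permit: float,
    expected_time_reduction: float,  # fraction between 0 and 1
    loaded_hourly_rate: float,
) -> tuple[float, float]:
    """Return (staff hours saved, labor cost saved) per year."""
    if not 0 <= expected_time_reduction <= 1:
        raise ValueError("expected_time_reduction must be between 0 and 1")
    hours_saved = permits_per_year * review_hours_per_permit * expected_time_reduction
    return hours_saved, hours_saved * loaded_hourly_rate


# Placeholder inputs for illustration only; substitute your own baseline.
hours, cost = estimate_annual_savings(2000, 4.0, 0.25, 60.0)
print(f"{hours:,.0f} staff hours, ${cost:,.0f} in labor cost")
# 2,000 staff hours, $120,000 in labor cost
```

This captures only reduced staff time per application. Throughput, earlier fee collection, and workforce effects from the list above would need to be estimated separately.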

Building a credible business case

The more specific your baseline, the easier it is to defend the investment and measure results once the system is live. Compile the following information to help make the internal business case:

- Permit volumes by type

- Average review time

- Number of correction cycles per application

- Staffing levels and vacancies

- Known bottlenecks in intake or review

Rather than projecting improvements across every permit type, focus initial proposals on one high-volume permit category or on intake. Early wins build confidence and create the momentum needed for future expansion.

How long does it take to see results?

Time to value depends on scope, but early adopters of AI plan review software are learning that intake-focused deployments show measurable improvements within weeks of going live. More complicated deployments across multiple departments take longer to configure, but usually deliver compounding benefits as staff adoption increases and workflows stabilize.

In the City and County of Honolulu, CivCheck reduced permit review cycles by 58%, corrections per permit by 67%, and time to permit decision by 55%. Before CivCheck, permits averaged 23.5 corrections per application. With CivCheck, that dropped to 7.7.
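The corrections figures above can be checked with the standard percent-reduction formula. The short sketch below shows the arithmetic; the `percent_reduction` helper is just an illustrative name.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# 23.5 corrections per application before, 7.7 after.
print(round(percent_reduction(23.5, 7.7)))  # 67
```

The same calculation works for any before-and-after metric you track in your own baseline, such as review cycles or days to decision.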

Funding, Buy-In, and Procurement

Securing approval for AI plan review software typically involves three steps: building internal buy-in, preparing a funding proposal, and navigating procurement. The departments that succeed start by framing the conversation around the operational problem, not the technology.

Building internal buy-in

Gaining support for AI plan review often starts with defining the problem it's meant to solve. Framing the conversation around operational challenges—such as growing backlogs, inconsistent reviews, or staff capacity constraints—helps stakeholders see the technology as a useful tool.

Who to involve early:

- Plan reviewers and intake staff are the most important voices in the room. They're closest to the work and can pinpoint where delays and rework actually happen. Early involvement also helps address concerns about how AI will affect review responsibilities.

- IT needs to understand how the platform handles data, what integration points exist within your permitting system, and the security requirements. Bringing IT in early avoids last-minute surprises that can delay go-live by weeks.

- Department leadership and elected officials are often catalysts for progress, especially when permitting performance is tied to housing delivery or economic development goals. Connecting faster, more consistent permitting to outcomes leadership already cares about makes the case easier to carry up the chain.

- Finance and procurement help translate operational impact into budget and purchasing terms. They'll want to understand the total cost of ownership, available funding vehicles, and what the procurement process looks like.

Preparing a funding proposal

Funding proposals share some of the same data as an internal business case, but the focus is on financial specifics. Useful inputs to include:

- A detailed cost breakdown from your preferred vendor (software, implementation, and ongoing costs)

- Your proposed budget, including a contingency for scope changes

- Expected ROI (which you can estimate with this AI plan review ROI calculator) tied to specific, measurable goals

- A timeline from purchase through implementation

- Available funding vehicles (grants, general fund, capital improvement budget, or federal/state programs)

Navigating procurement

After budget approval, you may or may not have to go through a formal procurement process. If you do, expect the timeline to range from a few months for a simple process to six months or longer for a full public RFP cycle.

Before issuing an RFP or RFQ, document how plan review is performed today, including local amendments, review group handoffs, and acceptable ways to demonstrate compliance. The more clearly these realities are reflected in procurement documents, the easier it is to evaluate vendor responses against your needs.

Options for streamlining procurement include:

- Cooperative contracts or buying groups can reduce timelines significantly

- Direct purchase may be possible if your jurisdiction's purchasing rules allow it under a certain threshold

- Invite-only RFP limits the number of proposals if you've already narrowed your options

- Pilot or voluntary rollout before full deployment reduces risk and can simplify the approval process

Planning for long-term success

Long-term success with AI plan review depends less on the software itself and more on treating it as part of a broader process improvement that includes technology, process, and people.

AI plan review software needs to adapt to changing codes, policies, and workloads. Choosing a platform that supports ongoing configuration through experienced implementation partners helps ensure the investment continues to deliver value over time.

The fundamentals haven't changed: start with a clear problem, adopt in phases, and find a partner who understands your operations as well as they understand their own technology.

Ready to see how CivCheck can cut review times and reduce correction cycles in your jurisdiction? Contact our team to schedule a walkthrough. We'll help you determine whether AI plan review is the right fit, where to start, and what results are realistic for your specific situation.

Frequently Asked Questions

How accurate is AI plan review software?

Accuracy varies by platform and check type. Real-world engagements give the clearest picture: in Calgary, CivCheck achieved 93% accuracy on zoning compliance checks and 94% on document completeness across 30 bylaw regulations. In Seattle, CivCheck achieved 92% accuracy against human reviewer decisions across more than 1,200 checks. When evaluating vendors, ask for performance data from live deployments or structured engagements — not just demos — and ask how accuracy is measured for specific check types.

Does AI replace plan reviewers?

No. AI plan review is designed to support reviewers, never replace them. Reviewers still evaluate intent, handle rare cases, and make every final determination. The software reduces time spent on information gathering so reviewers can focus on the decisions that require their expertise.

How long does AI plan review implementation take?

Intake-focused deployments can go live in weeks. More complex rollouts across multiple departments and permit types take longer, but most jurisdictions see measurable results within the first few months.

Can AI plan review software handle local amendments?

It depends on the platform. Some require custom development to reflect local amendments, which adds cost and time. Others use configuration-based approaches that can be updated more quickly. Ask vendors specifically how they handle local amendments and whether there's an additional cost.

Is AI plan review software safe for government use?

Yes, when the platform is built with transparency and human oversight in mind. Ask vendors whether reviewers can see where every answer came from, whether the platform applies requirements consistently, and whether final decisions stay with qualified staff. A conversation with the vendor about how they handle exceptions will tell you a lot about their platform's safety.

What does AI plan review software cost?

Most platforms price based on permit volume, project type, and scope of functionality. Costs vary, but modular pricing models allow jurisdictions to start with intake or a single permit type and expand over time. For budget planning purposes, ask vendors for tiered pricing ranges rather than exact per-permit costs.